The world of modern business floats on a sea of data, and organizations’ information on their customers, products, and partners is becoming a recognized source of insight. However, as valuable as this data is, it’s often scattered across several locations and legacy systems, making it almost impossible to analyze effectively.

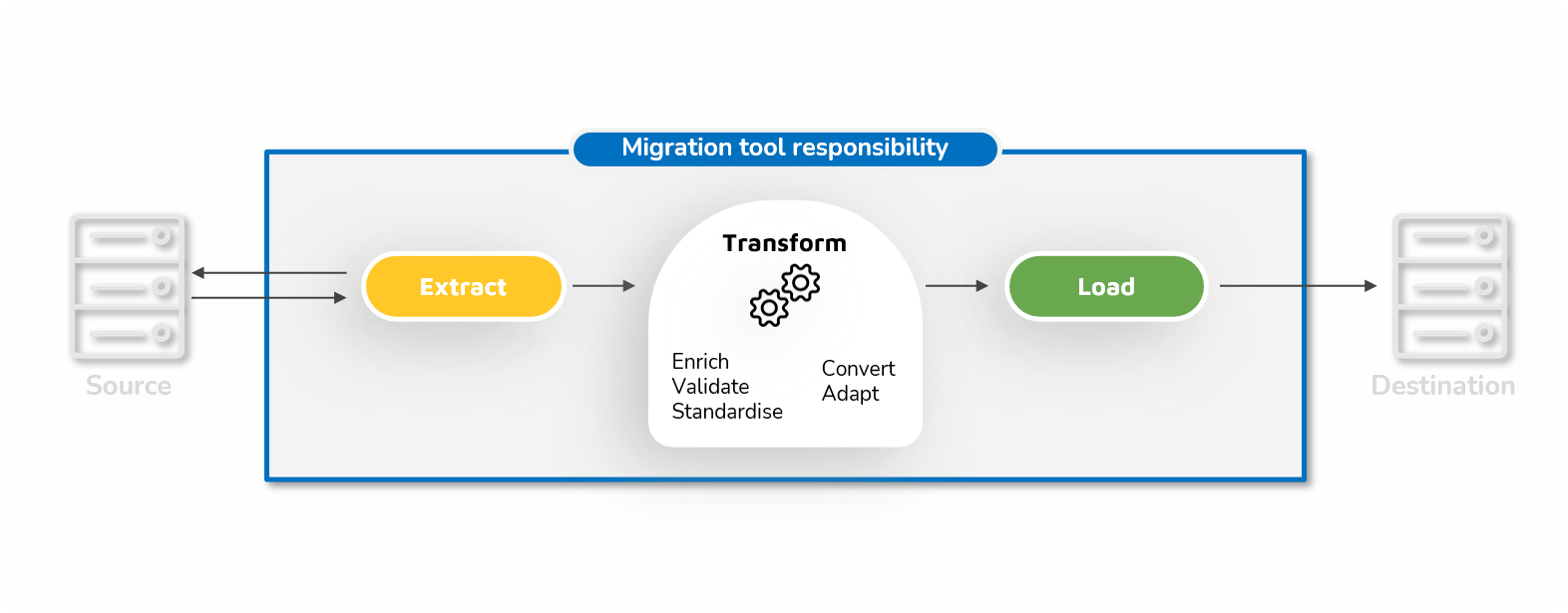

Turning this data from an abstract, purely theoretical source of information into a tangible pathway to insights requires it to be centralized and standardized. To do this, the data must be extracted from its original location, transformed into a useful format, and then loaded into a single target system.

Embarking on this extract, transform, and load (ETL) process can be daunting – especially for organizations with large stores of data still in legacy systems and with operations spanning multiple sites. However, organizations can simplify many complexities in the process by using a specialized ETL tool rather than attempting to handle it manually.

What is an ETL tool?

In short, ETL tools are specialized applications designed to migrate large volumes of data from various sources into a single target system, where it can be viewed in a single, consistent context.

As the name suggests, ETL tools work in three distinct phases. First, they extract and combine the data from one or several source systems, which could range from legacy databases to modern analytics programs.

Next comes the data transformation phase, where the tool applies a series of rules to the extracted data. The aim of this phase is to ensure that the finished data is easily accessible and usable, and compliant with the destination prerequisites. Depending on your requirements, this can mean standardizing formatting, cleaning out any inconsistencies, or cutting out redundant or duplicated data.

Finally, the ETL tool loads the transformed data into the final target. This target can be any data store, from a simple delimited flat file to a data warehouse. While this final phase may seem simple, modern tools work to optimize what would otherwise be a lengthy, resource-hungry loading process.
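To make the three phases concrete, here is a minimal sketch using only Python's standard library. It is an illustration rather than how any particular ETL tool works: the two CSV exports, the `customers` table, and the cleaning rules (trimming whitespace, lowercasing emails, dropping duplicate emails) are all assumptions made up for this example.

```python
import csv
import io
import sqlite3

# Hypothetical source data: two exports with inconsistent formatting
# and one customer who appears in both.
CRM_EXPORT = """email,name
Ada@Example.com , Ada Lovelace
grace@example.com,Grace Hopper
"""
LEGACY_EXPORT = """email,name
ada@example.com,Ada Lovelace
alan@example.com,  alan turing
"""


def extract(*sources):
    """Extract: read rows from each CSV source and combine them."""
    rows = []
    for text in sources:
        rows.extend(csv.DictReader(io.StringIO(text)))
    return rows


def transform(rows):
    """Transform: standardize formatting and drop duplicate emails."""
    seen = set()
    cleaned = []
    for row in rows:
        email = row["email"].strip().lower()
        name = " ".join(row["name"].split()).title()
        if email in seen:
            continue  # keep only the first record for each email
        seen.add(email)
        cleaned.append((email, name))
    return cleaned


def load(records, conn):
    """Load: write the cleaned records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers (email TEXT PRIMARY KEY, name TEXT)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?)", records)
    conn.commit()


# An in-memory SQLite database stands in for the target system.
conn = sqlite3.connect(":memory:")
load(transform(extract(CRM_EXPORT, LEGACY_EXPORT)), conn)
rows = conn.execute("SELECT email, name FROM customers ORDER BY email").fetchall()
print(rows)
```

Here the four source rows collapse into three clean customer records. A dedicated tool layers scheduling, monitoring, connectors, and optimized loading on top of this basic shape.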

The Benefits of an ETL Tool

In theory, organizations can handle the ETL process without using tools. It’s possible to hand-code each step using existing resources and complete many tasks manually.

However, these custom processes are often fragile and difficult to update or modify if any part of the data pipeline changes. They can also struggle with the volume of data that many organizations produce. With a Seagate report predicting that enterprise data stores will grow at more than 40% per year, this problem is only likely to get worse.

Simply put, there are many benefits to using a specialized ETL tool:

Cutting Through Complexity

An organization’s data stores are often made up of an incredibly diverse and complex array of formats and locations. Businesses can store information across a network of old databases, CRM systems, and spreadsheets; this complexity only increases if the organization spans multiple sites – let alone countries!

A specialized ETL tool can easily make sense of this diverse array of data. Automated processes can simplify and sort a tangled mess of systems into something that analysts can easily digest.

Saving Time

Accessing data that is scattered across several locations and lacks formal structure can be time-consuming. This delay can quickly become a bottleneck in an organization’s data pipeline, holding up projects and causing valuable insights to arrive too late to be helpful.

An ETL tool can allow quick and easy access to valuable data. This immediate access can eliminate a retrieval bottleneck and enable analysts to uncover insights rapidly.

Reducing Errors

As much as we try to avoid them, we must accept that typos, misclicks, and misunderstandings are inevitable in any manual process. Even a slight slip-up with data processing can cascade downstream to cause real issues with the final figures your team is working with and, therefore, their insights.

An intelligent ETL tool can virtually eliminate these kinds of errors, both by automating data processing and by watching for inconsistencies between sources. This makes the final data much more valuable.
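As a rough illustration of what watching for inconsistencies between sources can look like, the sketch below compares the same customer records held in two systems and flags any that disagree. The `crm` and `billing` dictionaries, their IDs, and the `find_conflicts` helper are hypothetical; a real tool would apply far richer matching rules.

```python
def find_conflicts(source_a, source_b):
    """Return keys present in both sources whose values disagree."""
    conflicts = {}
    for key in source_a.keys() & source_b.keys():
        if source_a[key] != source_b[key]:
            conflicts[key] = (source_a[key], source_b[key])
    return conflicts


# Hypothetical customer emails keyed by customer ID in two systems.
crm = {"C001": "ada@example.com", "C002": "grace@example.com"}
billing = {
    "C001": "ada@example.com",
    "C002": "g.hopper@example.com",
    "C003": "alan@example.com",
}

conflicts = find_conflicts(crm, billing)
print(conflicts)
```

Only `C002` is flagged, because it exists in both systems with different values. `C003` exists in just one source, so under this simple rule it is not treated as a conflict.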

Improving Intelligence

Ultimately, the goal of any ETL tool is to ensure that an organization can easily access its data and rely on the insights it provides. It does this by breaking down the information silos that invariably develop when teams are split across tools, sites, and systems, and by ensuring data is stored in a single location.

Armed with this information, an organization can make data-driven decisions that impact everything from staffing levels to sales targets. According to Accenture, some 90% of business strategies rely on data as a crucial success factor, and with a good ETL tool to hand, organizations can ensure that these strategies are the right ones.

Which Tool to Pick?

Of course, the process of picking an ETL tool isn’t a simple one. There are several varieties of ETL tools out there, each with its own ideal use case.

Beyond manual processing and hand-coding solutions, there are three main varieties of ETL tools: batch processing, real-time, and cloud-based.

Batch processing tools operate just as the name suggests, extracting relatively small batches of data at a time before transforming and loading them into their destination. These batches are usually handled within a specific time window, ensuring that the ETL process doesn’t consume too many resources.
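The batching idea can be sketched in a few lines of Python. This is a simplified illustration, not a description of any specific product: the `batches` and `run_batch_job` helpers, the batch size, and the toy transformation (trimming and uppercasing strings) are all assumptions for the example.

```python
from itertools import islice


def batches(records, size):
    """Yield successive lists of at most `size` records."""
    iterator = iter(records)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


def run_batch_job(records, size, load):
    """Transform and load records one batch at a time; return the count loaded."""
    loaded = 0
    for batch in batches(records, size):
        transformed = [record.strip().upper() for record in batch]
        load(transformed)  # hand each finished batch to the target
        loaded += len(transformed)
    return loaded


# A plain list stands in for the target system.
target = []
total = run_batch_job([" a", "b ", "c", "d", "e"], size=2, load=target.extend)
print(total, target)
```

Because only one batch is held in memory at a time, the job's resource use stays bounded however large the source grows. In practice, a scheduler would start a job like this within the chosen time window.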

This type of processing can be useful if time isn’t a pressing concern or if an organization can operate on a fixed schedule – for example, generating data during the day and exporting it at night. However, this means it can be relatively slow, and if an analyst needs to access data that hasn’t been added to a batch, they may struggle to find it.

Real-time processing avoids this issue by handling data as quickly as possible. The ETL tool scales up or down to meet current demand, ensuring that analysts always have access to the latest data.

While this can generate impressive results, real-time processing can be inefficient. It’s doubtful that every piece of data an organization generates needs to be processed rapidly, and the resources spent tackling the ETL process could potentially be better spent elsewhere.

Finally, cloud-based migration solutions represent the most modern approach to ETL. These tools are deployed in the cloud and offer a flexible, agile approach to data management. Because they do not rely on local systems, they can be easily scaled up or down.

Depending on requirements, these tools can either be hosted on the cloud or used as part of an organization’s cloud infrastructure, allowing information to flow from legacy systems onto cloud-based data warehouses.

Managing a Smooth Migration

The volume and complexity of an organization’s data can make migration seem daunting. With the right ETL tool to hand, however, even the most tangled web of systems can soon be transformed into a valuable source of intelligence.

Whether you’re looking to migrate valuable data from legacy systems or consolidate intelligence on a modern ECM, reach out to Fast2 today and see which tool works best for you.